The market is currently drunk on the idea that a "stamp of approval" from an AI lab like Anthropic validates a cybersecurity firm’s long-term dominance. It’s a seductive narrative. Large Language Models (LLMs) need security; security firms need AI. Wall Street sees the partnership, checks the box, and sends the stock price into the stratosphere.

They are dead wrong. Read more on a related subject: this related article.

What the "stamp of approval" actually represents is a desperate outsourcing of risk. When a titan like Anthropic or OpenAI integrates with a legacy cyber stock, they aren't crowning a king. They are identifying a temporary patch for a structural flaw in their own rapidly evolving architecture. Investors buying the hype are mistaking a vendor agreement for a moat.

The Mirage of the AI Integration

The current consensus suggests that because AI companies are partnering with big-name cyber firms to protect their weights, data, and inference pipelines, those cyber firms are now "AI-proof." Further analysis by The Next Web explores comparable views on this issue.

I’ve spent fifteen years watching legacy tech vendors slap new stickers on old boxes. This is "Cloud-Native" all over again, but with higher stakes and faster depreciation. The "lazy consensus" assumes that the defensive perimeter around an AI model is a static problem that companies like CrowdStrike or Palo Alto Networks can solve with their existing toolkits.

It isn’t.

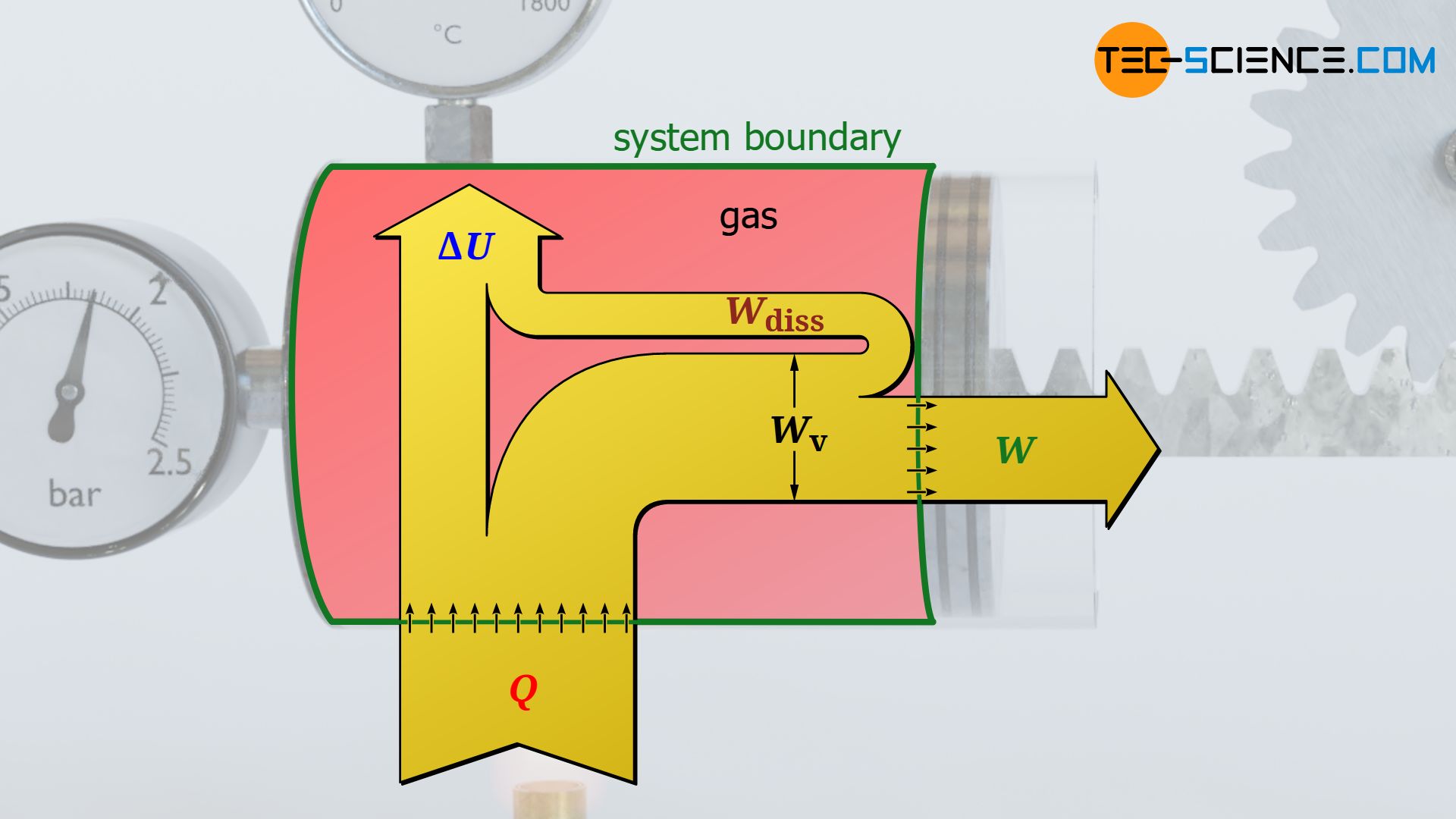

The threat model for an LLM is fundamentally different from a traditional database or endpoint. We are moving from a world of Deterministic Security—where $A$ leads to $B$ and a rule stops $C$—to Probabilistic Security.

In traditional systems, code is data and data is code, but they are separated by strict execution boundaries. In an LLM, the prompt (data) is the instruction (code). You cannot "firewall" a prompt without neutering the model's utility. When Anthropic "approves" a cyber vendor, they are usually just buying a glorified logging tool while they figure out how to build internal, native defenses that will eventually make that third-party vendor obsolete.

Why Legacy Cyber Stocks Are the New Technical Debt

Cybersecurity has always been a game of "bolting things on." You have a network; you bolt on a firewall. You have a laptop; you bolt on an EDR (Endpoint Detection and Response) agent.

The market thinks these "big tech names" are the winners because they have the largest sales forces and the most integrations. But in the AI era, integration is an anchor.

Every legacy cyber giant is currently trying to pivot their monolithic, signature-based engines to handle the fluid, non-linear threats of AI. They are fighting the last war. They are trying to apply $1.0$ logic to a $3.0$ problem.

- Latency is the Killer: If a security layer adds 200ms of latency to an LLM response because it has to ship the prompt to a cloud sandbox for "AI-driven analysis," the user experience dies.

- The Context Window Problem: Legacy security tools don't understand context. They look for patterns. But an AI attack (like a prompt injection) often looks exactly like a legitimate query until the 10,000th token.

- The Talent Gap: The people who understand how to break a transformer model aren't working at 30-year-old firewall companies. They are at the labs, or they are starting the very companies that will disrupt the "big tech names" currently enjoying that AI bump.

Thought Experiment: The Invisible Firewall

Imagine a scenario where the "security" of an AI isn't a separate product you buy. Instead, it’s a set of weights within the model itself—a "Safety LoRA" (Low-Rank Adaptation) that filters intent at the neuron level during inference.

In this world, what happens to the $20 billion market cap of a company that sells external "AI Gateways"? It vanishes.

The "stamp of approval" isn't a partnership; it's a research phase. Anthropic and its peers are using these cyber stocks as a buffer while they perfect Self-Securing AI. Once the model can identify its own adversarial inputs with 99.9% accuracy, the "Cyber Stocks" become redundant overhead.

The Real Winners Aren't on Your Watchlist

If you want to find the real value, stop looking at the companies getting "stamps of approval" and start looking at the companies that make the stamps unnecessary.

The industry is currently obsessed with "AI Security" as a category. It’s the wrong category. The real movement is Data Provenance and Compute Integrity.

- Confidential Computing: Instead of trusting a software agent from a big-name cyber firm, we are moving toward hardware-level encryption where the model weights are never decrypted in system memory.

- Adversarial Training: Why pay a subscription for a "shield" when you can train the model to be inherently resistant to jailbreaking?

The "big tech names" mentioned in these breathless analyst reports are often the ones with the most to lose. They are the incumbents whose high margins depend on the complexity of yesterday's problems. AI simplifies the attack surface in some ways while exploding it in others, but it almost always favors the native over the attached.

Dismantling the "People Also Ask" Delusions

You’ll see investors asking: "Which cyber stock has the most AI patents?"

This is a loser’s question. Patents in a field moving this fast are just expensive wallpaper. The real question is: "Which company has the lowest latency in their inference-time filtering?" Most "big tech" names won't even give you that number because it's embarrassing.

Another common query: "Is AI making hacking easier?"

Yes, but that's not the point. AI is making automated defense a commodity. When defense becomes a commodity feature of the operating system or the model itself, the premium "Cyber Stock" business model collapses.

The Downside of My Argument

To be fair, the transition to self-securing models won't happen overnight. There is a "Gap of Incompetence" that will last 3 to 5 years. During this window, legacy cyber firms will print money by selling "AI Protection" packages that are mostly marketing and slightly improved regex filters.

If you are a swing trader, follow the hype. If you are an investor, realize that you are buying the top of a cycle for a product category that is being engineered out of existence by the very companies "approving" them.

The Strategy for the Discerning

Stop chasing the "AI Stamp of Approval." It’s a lagging indicator of a vendor’s marketing budget, not a leading indicator of their tech’s longevity.

- Look for Infrastructure, not Overlays: The value is in the plumbing (NVIDIA, TSMC, and specialized networking) or the models themselves.

- Ignore the "Partnership" Press Release: A partnership between a $100B legacy tech firm and a $30B AI lab usually means the big firm is paying for the privilege of staying relevant for one more quarter.

- Bet on Latency: If a security solution makes the AI slower, it will eventually be replaced. No exceptions.

The market treats these partnerships as a marriage. In reality, it's a short-term lease. Anthropic is renting the reputation of these cyber stocks to calm their enterprise customers. As soon as the ink is dry on the next generation of self-correcting models, that lease will be terminated.

The "AI Stamp of Approval" is a signal to exit, not enter. Don't be the one holding the bag when the model learns to lock its own door.